Contact Us

Ready to Ship AI Systems to

Let's align architecture, execution, and delivery from day one.

Published On Mar 19, 2026

Updated On Mar 19, 2026

If your AI pilot worked but your production system didn't, you're not alone.

88% of companies are using AI, and only 39% can point to real results.

In other words, AI adoption is widespread, but measurable results are still uneven.

It rarely comes down to the model you chose. It comes down to how the system around it was designed.

This guide maps the AI development landscape in 2026, the technologies, architectures, and engineering approaches teams are using to finally bridge that gap.

Let’s get started.

For years, companies used AI in narrow ways, such as recommendation engines, fraud detection systems, and search ranking algorithms.

These systems delivered value, but they usually operated as isolated components inside larger applications.

That model is now changing.

AI is moving from standalone features to core software infrastructure. Instead of sitting on the edge of products, AI is becoming embedded directly into applications, workflows, and internal systems.

This shift is happening quickly.

Gartner predicts up to 40% of enterprise applications will include integrated task-specific agents by 2026, up from less than 5% today.

In practical terms, AI capabilities are now appearing across products as assistants, natural language interfaces, workflow automation tools, and decision-support systems.

The shift is not limited to products. It is changing how software gets built internally too.

Developers increasingly rely on AI tools for writing code, debugging, generating documentation, and reviewing pull requests.

Recent estimates suggest about 41% of code written is AI assited, with about half of developers using AI tools daily.

This means AI is becoming part of the standard development workflow, not just a productivity add-on.

Today organizations build shared internal platforms, often referred to as the AI factory model, that combine centralized data pipelines, common infrastructure, and reusable components.

This allows teams to build and deploy AI capabilities repeatedly across multiple products.

And to deploy the AI capabilities, it is important to understand the forces driving this shift.

Several shifts are reshaping how engineering teams build AI systems.

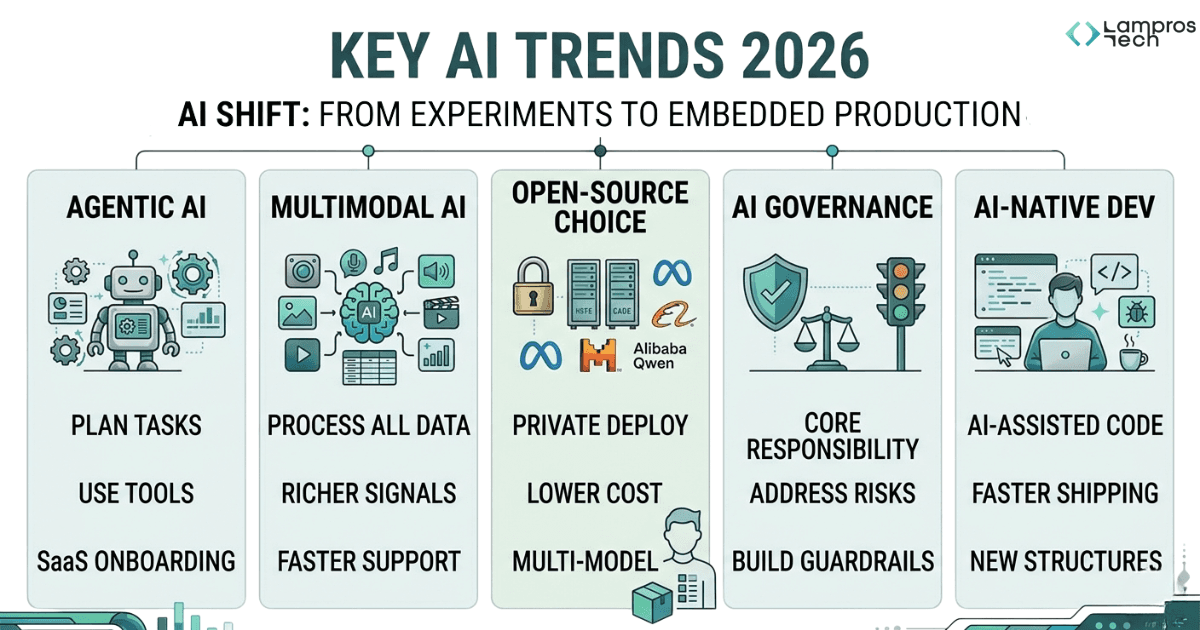

Five trends that are shaping the AI development landscape are agentic AI, multimodal systems, open source AI, governance AI, and AI native development workflows.

Together, these trends show how AI development is shifting from isolated experiments toward production systems embedded inside SaaS products and engineering environments.

Agentic AI systems can plan multi-step workflows, call external tools, interact with APIs, and execute actions with minimal human intervention.

For SaaS products, this enables a new generation of features where applications can

As a result, teams are increasingly exploring how agent-based systems can operate reliably inside production environments.

AI systems are no longer limited to text.

Modern models can process text, images, audio, video, and structured data within the same workflow, allowing applications to analyze richer signals across product interactions.

For example,

As multimodal models mature, SaaS products are evolving from simple AI assistants to systems that understand multiple forms of user input.

Until recently, most production AI systems relied on proprietary models.

Open-weight models from companies such as Meta, Mistral, and Alibaba’s Qwen are closing the performance gap with proprietary systems while allowing organizations to run AI on their own infrastructure.

For companies, this shift creates new options of:

As a result, many engineering teams now design systems that can work with multiple models rather than relying on a single vendor.

As AI systems move into production, governance is becoming a core engineering responsibility.

As, it introduces risks that traditional security models were not built to handle, including prompt injection, unintended automation, and exposure of sensitive data.

At the same time, regulatory oversight is increasing.

For SaaS platforms, this means building monitoring, guardrails, and evaluation mechanisms directly into AI architectures.

AI is also changing how software is built.

Engineers now use AI tools to write code, debug systems, generate documentation, and review pull requests.

Industry estimates suggest about 40% of new code is now written with AI assistance.

This shift allows smaller teams to ship features faster and experiment more quickly.

The biggest challenge now is to build systems, workflows, and governance structures that allow organizations to use those models effectively.

The next section looks at how the companies are shaping the AI landscape today.

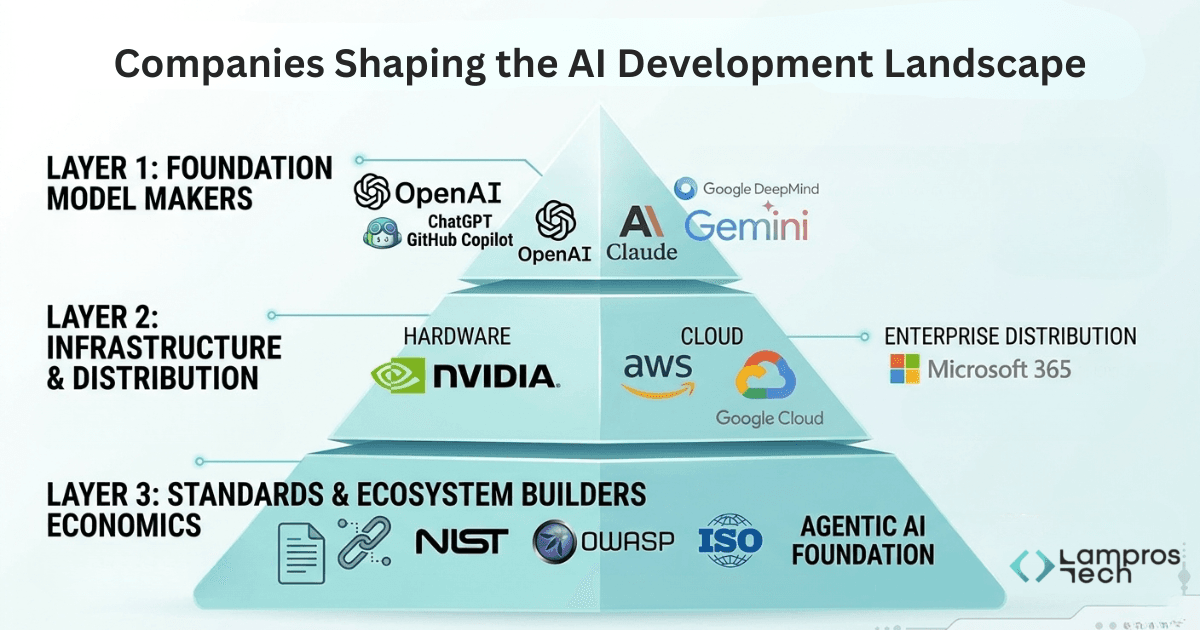

AI development today is not shaped by a single company or technology.

But, it is shaped by a small group of companies that control the core layers of the AI ecosystem.

Together, these companies influence how AI systems are built, deployed, and integrated into real products.

Understanding these layers helps explain why certain platforms and tools are becoming central to modern AI development.

The intelligence your stack runs on

Large language models and multimodal systems now power most AI applications, from copilots and assistants to automation tools and analytics systems.

The companies building these models therefore influence what developers can build.

For most organizations, these companies provide the intelligence layer that modern AI applications depend on.

The engine that powers and delivers AI systems

Building AI systems requires significant computing power.

The companies in this layer determine how quickly organizations can develop, deploy, and scale AI applications and at what cost.

This layer includes both hardware providers that power AI workloads and cloud platforms that deliver these capabilities to developers and enterprises.

Together, these infrastructure providers shape the economics and accessibility of AI development by influencing compute availability, deployment speed, and inference costs.

For many organizations, this infrastructure layer becomes the operating environment for their entire AI strategy.

The layer that makes AI systems interoperable

A third layer of the ecosystem focuses on how AI systems connect to software environments.

Standards bodies and open initiatives are beginning to define how AI systems should integrate with enterprise data, APIs, and governance processes.

Efforts such as:

Are shaping how AI systems are secured, audited, and connected to existing systems.

These standards are still maturing, but they're already influencing architectural decisions.

Teams building agent-based systems today are designing around MCP. Teams in regulated industries are building toward NIST and ISO compliance from day one.

Getting familiar with this layer and the tech stack used saves significant rework later.

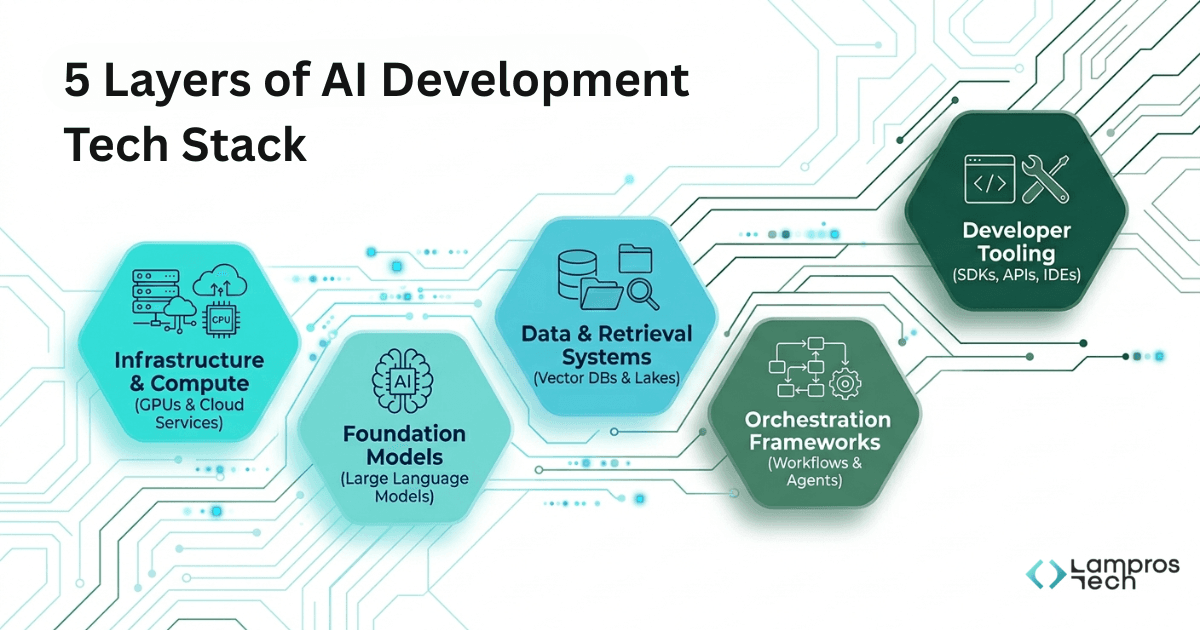

AI systems are built using multiple tools that serve different roles in the development process.

Engineering teams typically organize these tools into five layers:

compute infrastructure, foundation models, data and retrieval systems, orchestration frameworks, and developer tooling.

Each layer solves a different problem in building production AI applications.

Most modern AI systems follow a stack like this:

AI models require GPU infrastructure and scalable computing environments.

Engineering teams commonly run workloads on:

Each offers managed compute, model hosting, and scaling infrastructure that removes the burden of managing physical hardware.

For teams that need more flexibility or lower inference costs, specialized platforms like RunPod, Together AI, and Modal are also becoming serious alternatives, particularly for deploying open-weight models at scale.

These tools allow engineering teams to run AI systems at scale without managing physical infrastructure.

Most teams don't build models, they build on top of them.

Engineering teams typically use models from providers such as:

These models provide the reasoning and generation capabilities that power everything from conversational interfaces to document summarization and code generation.

The decision here isn't just which model performs best on a benchmark. It's about reliability, pricing at scale, context window size, and how well a model handles your specific use case.

Most mature teams end up working with more than one.

Out of the box, AI models know nothing about your product, your customers, or your internal documentation.

Engineering teams use retrieval systems and vector databases to provide models with relevant context.

Common tools include:

These tools allow AI systems to retrieve relevant documents, product data, or internal knowledge before generating a response.

This approach is widely known as retrieval-augmented generation (RAG).

AI systems rarely involve a single prompt and a single response.

They involve chains, retrieve data, call an API, validate output, generate a response, and handle failure.

Orchestration frameworks are used by engineers to manage these workflows.

Common frameworks include:

These tools allow developers to build structured AI workflows rather than relying on isolated prompts.

Shipping an AI feature is one thing. Knowing whether it's performing well in production is another.

Engineering teams commonly use:

These tools help teams evaluate model performance and operate AI systems in production environments.

A deeper look at the modern AI tech stack engineering teams use shows how these components work together in production environments.

The next section explores the architectural patterns used to design modern AI systems.

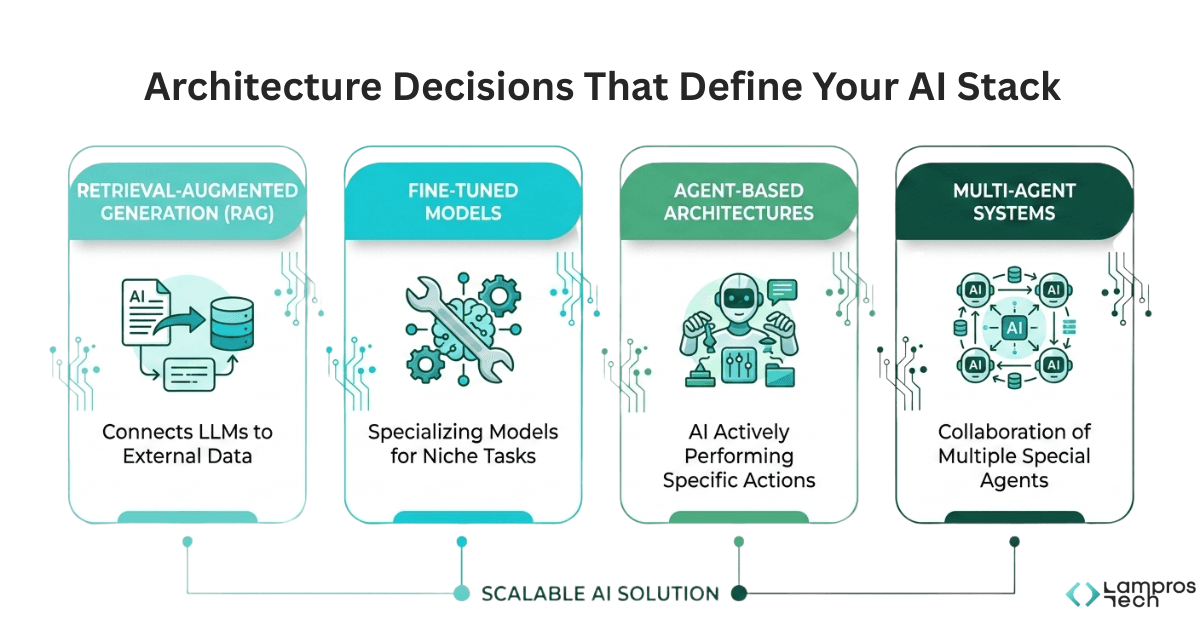

Once the tools in the AI stack are in place, the next decision is how they are structured together.

AI systems can be built in different ways depending on how models interact with data, tools, and application workflows. In practice, most production AI systems follow a few common architectural patterns.

These patterns determine how reliable, scalable, and flexible an AI application becomes over time.

Retrieval-augmented generation connects your AI system to external data like documents, databases, and knowledge bases, so the model can generate responses grounded in real, current information rather than relying solely on what it learned during training.

Engineering teams use RAG architectures to:

This pattern is widely used in internal knowledge assistants, documentation search tools, and AI copilots.

Sometimes the base model isn't enough.

Fine-tuning takes a foundation model and trains it further on domain-specific data, teaching it the language, tone, and patterns of a particular industry or task.

Fine-tuning works well for:

While fine-tuning can improve performance for specialized tasks, it also requires additional infrastructure for training, versioning, and model updates.

In an agent-based system, the model can call APIs, interact with external tools, execute multi-step tasks, and respond dynamically to what it encounters along the way.

Teams often use agent-based architectures to:

This approach enables AI systems to operate as active components inside applications rather than passive response generators.

For genuinely complex workflows, teams are increasingly splitting responsibility across multiple specialized agents, one for research, one for reasoning, one for execution, and one for summarization.

Each agent handles what it's best at.

Together they tackle workflows that would be too brittle or too slow for a single model to manage alone.

The coordination overhead is real, though. Multi-agent systems introduce new challenges around sequencing, failure recovery, and monitoring that single-agent systems simply don't have.

They're powerful when the complexity is justified and overkill when it isn't.

And, understanding how to choose the right AI architecture and framework can help engineering teams avoid costly redesigns as their systems scale.

Getting access to AI was never the hard part. Building something reliable around it is.

A few things that actually matter:

There's a reason 88% of companies use AI but only 39% see meaningful results. The gap isn't about model quality. It's about system design.

The next phase of AI development belongs to teams that treat it as an engineering discipline, not a capabilities race.

At Lampros Tech, that's exactly the work we do. If you're past the experiments and ready to build something that holds up in production, let's talk.

The modern AI tech stack has five layers: infrastructure and compute on AWS, Azure, or Google Cloud; foundation models from OpenAI, Anthropic, or Meta; data and retrieval systems using vector databases through RAG; orchestration frameworks like LangChain; and developer tooling for monitoring and evaluation. Each layer solves a distinct problem in building production AI systems.

Retrieval-augmented generation (RAG) connects a language model to external data sources, such as documents, databases, or knowledge bases, so it generates responses from real, current information rather than training data alone. Engineering teams use RAG to improve accuracy, reduce hallucinations, and keep AI outputs current without retraining the model.

Agentic AI systems plan tasks, use tools, and complete multi-step workflows autonomously, unlike standard AI models that respond to a single prompt and stop. Agentic systems can call APIs, retrieve data, and execute actions with minimal human intervention, making them suitable for automating onboarding, support, and internal workflows.

RAG connects a model to external data at inference time, best for current or proprietary information. Fine-tuning trains a model further on domain-specific data, best for consistent, specialised behaviour like customer support automation or document classification. RAG is faster to implement. Fine-tuning delivers stronger specialised performance but requires significantly more infrastructure.

Most AI initiatives fail due to system design weaknesses rather than model quality. According to McKinsey, 88% of organisations use AI but only 39% report meaningful results. Common failure points include poor data pipelines, no monitoring or guardrails, and over-reliance on a single vendor. Teams that treat AI as a systems design discipline consistently outperform those focused on model selection.

Build AI Systems That Actually Work in Production

Move beyond experiments. Design AI systems with the right architecture, data, and workflows to deliver real business impact.

Let’s Talk